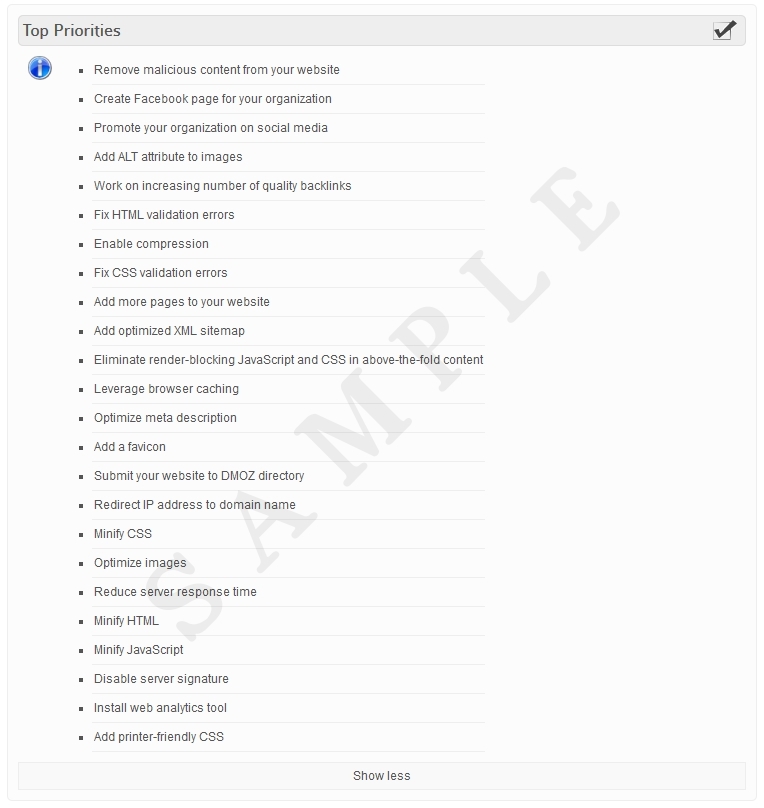

In early December 2017, Google started displaying longer description snippets on search engine results pages (SERPs) for certain search queries. The length of the description snippet seems to have doubled from 160 to 320 characters. A description snippet is a short block of text shown under each result link; it is derived from the description meta tag on a page or, in some cases, auto-generated from the page content.

There is no official character limit on the description meta tag, but until recently all major search engines limited their description snippets to 150 to 160 characters. Although Google announced in 2009 that the “description” meta tag is not used in its ranking algorithms, a short, informative, to-the-point meta description that does not get cut off mid-sentence on the SERP can increase click-through rates.

Google recommends that, in general, you should not need to modify the meta descriptions on your pages. During an “English Google Webmaster Central office-hours hangout”, Webmaster Trends Analyst John Mueller provided a little more feedback:

A few things to consider:

- Google often experiments with snippet length and the limit can change again.

- Test search queries from Google Search Console and review how your pages appear on the SERP. If you prefer a different (longer) description, go for it.

- It is not guaranteed that your description will be shown on the SERP. Google sometimes displays its own version of the description snippet, depending on how well the query matches the content on the page.

- Other search engines such as Bing, Yahoo, Baidu, DuckDuckGo, etc. currently limit their description snippets to approximately 160 characters.

As a result of this change, our Description Meta Tag Checker tool will now warn users if the page description is longer than 320 characters instead of 160.
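A length check of this kind can be sketched in a few lines of Python. This is only an illustration and not the actual code behind the tool: the names `MetaDescriptionParser`, `check_description`, and `MAX_DESCRIPTION_LENGTH` are hypothetical, and the 320-character limit is simply the figure discussed above.

```python
from html.parser import HTMLParser

# Assumed limit, based on the snippet length discussed in this post.
MAX_DESCRIPTION_LENGTH = 320


class MetaDescriptionParser(HTMLParser):
    """Collects the content of the first <meta name="description"> tag."""

    def __init__(self):
        super().__init__()
        self.description = None

    def handle_starttag(self, tag, attrs):
        if tag != "meta" or self.description is not None:
            return
        attributes = dict(attrs)
        if (attributes.get("name") or "").lower() == "description":
            self.description = (attributes.get("content") or "").strip()


def check_description(html, limit=MAX_DESCRIPTION_LENGTH):
    """Returns "missing", "too long", or "ok" for the page's meta description."""
    parser = MetaDescriptionParser()
    parser.feed(html)
    if parser.description is None:
        return "missing"
    if len(parser.description) > limit:
        return "too long"
    return "ok"
```

Passing `limit=160` reproduces the older, stricter check, which may still be useful when optimizing for search engines that have not lengthened their snippets.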

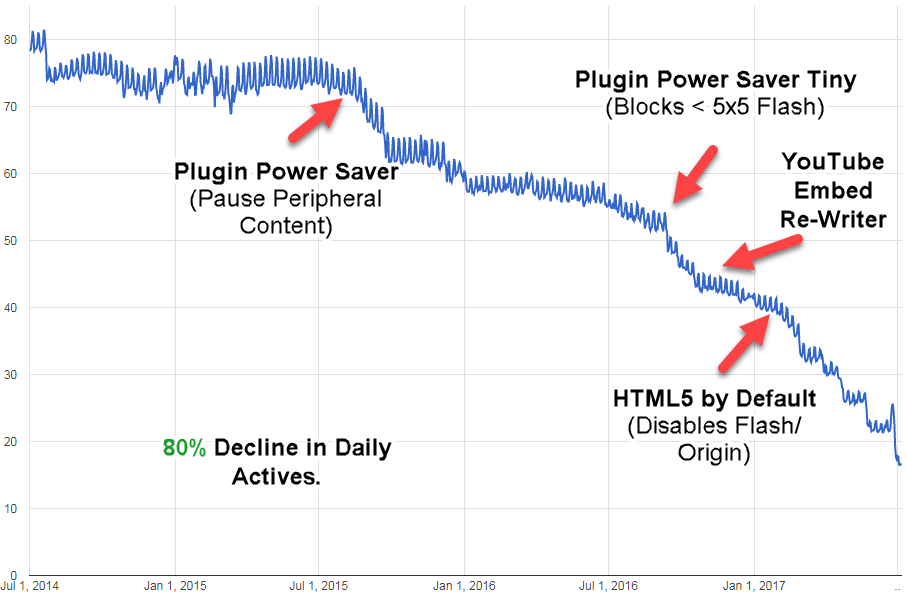

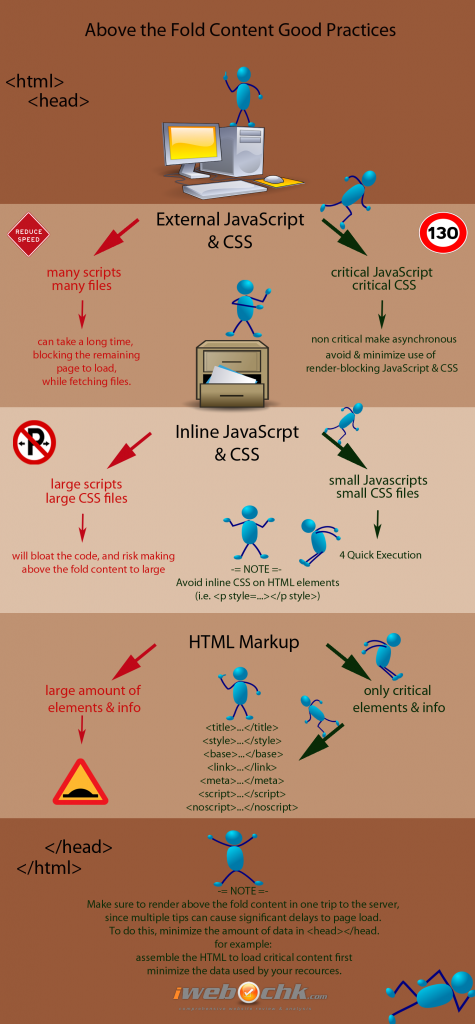

Eliminating render-blocking JavaScript and CSS (typically loaded from the `<head>`) for above-the-fold content is good practice to increase page speed. Before a browser can display the page content, it must first load the HTML markup along with the styles (CSS) and scripts (JavaScript) that the page references. The content on the page therefore stays blocked until all external style sheets are downloaded.
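As a rough illustration, the Python sketch below lists the external resources in a page's `<head>` that are likely to block rendering. It is a simplified heuristic rather than a full audit: the names `RenderBlockingParser` and `find_render_blocking` are hypothetical, and the check ignores details such as `media` attributes on style sheets and inline scripts.

```python
from html.parser import HTMLParser


class RenderBlockingParser(HTMLParser):
    """Collects external scripts and style sheets in <head> that may block rendering."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.blocking = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "head":
            self.in_head = True
        elif not self.in_head:
            return
        elif tag == "script":
            # External scripts block parsing unless marked async, defer, or type="module".
            is_module = (attributes.get("type") or "").lower() == "module"
            deferred = "async" in attributes or "defer" in attributes
            if attributes.get("src") and not deferred and not is_module:
                self.blocking.append(attributes["src"])
        elif tag == "link":
            # External style sheets block rendering until they are downloaded.
            rel = (attributes.get("rel") or "").lower().split()
            if "stylesheet" in rel and attributes.get("href"):
                self.blocking.append(attributes["href"])

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


def find_render_blocking(html):
    """Returns the URLs of likely render-blocking resources, in document order."""
    parser = RenderBlockingParser()
    parser.feed(html)
    return parser.blocking
```

Resources reported by a check like this are candidates for `async` or `defer` attributes, inlining, or moving to the end of the page.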
